Playing a ball game and moving furniture may seem like two completely different tasks, but they have a couple of things in common. First, both are easier to do together. Second, these seemingly undemanding tasks actually require a subtle combination of strength, moderation and synchronisation. Try playing with a ball or moving a table with a robot, and you'll see how difficult this actually is.

There is one humanoid robot, however, that might soon be able to overcome this difficulty. COMAN+ – an evolution of the COMAN humanoid developed under the earlier FP7 AMARSi project – is presented by its creators as a cheaper, more robust and more versatile humanoid capable of executing everyday tasks.

Prof. Dr Jochen Steil, coordinator of CogIMon (Cognitive Interaction in Motion), discusses the project's most important outcomes shortly before it ends in May.

What type of collaborative human behaviours did you aim to replicate with this project and to what end?

We focused on behaviours that require an understanding of applied forces and of the implicit communication they involve.

These include joint manipulation of objects (carrying tables, joint lifting of heavy objects) or mutual throwing and catching, because in these situations, forces are estimated from the movement. Another example is the force coupling of joysticks in a game, where players implicitly learn from each other through force-based communication.

How could mastering these behaviours be a game changer for industry?

Understanding forces is fundamental for physical robotic assistant systems, which actively support humans by regulating their strength according to human capabilities and the task at hand.

This goes beyond using compliance for safety alone: it allows for more sophisticated and human-friendly action, which will be a must for the next generations of assistive robotics. The forces humans apply vary widely even in repetitive tasks, so a 'one-size-fits-all' controller will not do in physical interaction. Assistance robots will need to actively control their strength for smooth, ergonomic and effective interaction.

Why did you focus on the COMAN robot specifically?

Humanoid research is the decathlon of robotics: it requires the integration of many fields and different kinds of expertise to tackle one big technological and scientific challenge that promises major advances. It is a fascinating and demanding field that naturally attracts many best-in-class researchers and requires joint efforts, notably under EU-funded initiatives.

COMAN is one of these European success stories whose development was significantly funded through EU research frameworks. It is torque-controlled and features springs in its body, so that it can act safely in human-robot interaction and actively regulate its full-body compliance. With its advanced actuation technology, it is one of the first and best humanoid robots to do so.

This story continues in CogIMon through the development of COMAN+, the next generation of humanoid robots, which will be more robust, cheaper and more versatile, and capable of executing everyday tasks.

What would you say were the project’s most important achievements?

There are both scientific and technological achievements. Scientifically, CogIMon advanced the current understanding of human interaction in motion, work driven mostly by our partners in human motion science. This has led to new models of how humans learn to control forces in interaction, which are now also implemented in robot controllers. In addition, the state of the art in compliant control for humanoids, multi-arm and multi-leg systems was significantly advanced.

Technology-wise, the scaled-up humanoid robot COMAN+ strengthens the world-leading position of European research in the development of variable impedance actuation and compliant humanoid robots. We have also developed engineering tools for the simulation and control of such robots and made them open source. Finally, we have created the technology to run robot controllers in VR, which opens new avenues for mixed-reality applications.

How did you proceed to demonstrate these technologies?

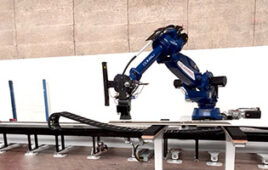

CogIMon has demonstrated, for the first time, two humanoid robots carrying objects together. The project has also shown how four compliant robot arms can collaborate to lift and move a heavy object in interaction with a human, devised new methods for soft robot catching, and produced demonstrators that were twice finalists for the Kuka Innovation Award at the Hannover Fair. Finally, we have developed a very promising application in physiotherapy, where virtual reality and robot control are combined to enable ball-catching training for patients.

We are going to demonstrate COMAN+ and these applications to the public and the scientific community at the upcoming ICRA exhibition.

What’s been the feedback from industry so far?

Most of CogIMon’s work is rather fundamental and our humanoid robots are still far from making it into industrial applications. There is a lot of interest, but little direct feedback from concrete use cases.

However, our successful participation in the innovation awards and the demonstration of advanced algorithms for compliance control have generated a lot of attention. The actuation units developed for COMAN+ are currently being commercialised, and the first evaluation studies with real patients are being conducted for our physiotherapy application. The VR-robotics mixed-reality approach has also resulted in a new collaboration with an SME.

What are your follow-up plans?

We will focus on applications in healthcare, physiotherapy and ergonomics, to further develop the combination of VR and robotics and enable safe physical interaction for training. This requires further advances in both hardware and engineering tools, so that application development can become more coherent and systematic while remaining flexible.

Follow-up research will also concern multi-robot applications, as well as the control of humanoid robots in everyday tasks. The humanoid robotics decathlon will certainly continue in the years to come.
