If you haven’t heard, Tesla CEO Elon Musk is not a LiDAR fan. Most companies working on autonomous vehicles – including Ford, GM Cruise, Uber and Waymo – think LiDAR is an essential part of the sensor suite. But not Tesla. Its vehicles don’t have LiDAR and instead rely on cameras, radar, GPS, maps and other sensors.

“LiDAR is a fool’s errand,” Musk said at Tesla’s recent Autonomy Day. “Anyone relying on LiDAR is doomed. Doomed! [They are] expensive sensors that are unnecessary. It’s like having a whole bunch of expensive appendices. Like, one appendix is bad, well now you have a whole bunch of them, it’s ridiculous, you’ll see.”

“LiDAR is lame,” Musk added. “They’re gonna dump LiDAR, mark my words. That’s my prediction.”

While not as anti-LiDAR as Musk, it appears researchers at Cornell University agree with his LiDAR-less approach. Using two inexpensive cameras on either side of a vehicle’s windshield, Cornell researchers have discovered they can detect objects with nearly LiDAR’s accuracy and at a fraction of the cost.

The researchers found that analyzing the captured images from a bird’s-eye view, rather than the more traditional frontal view, more than tripled their accuracy, making stereo cameras a viable and low-cost alternative to LiDAR.

Tesla’s Sr. Director of AI Andrej Karpathy outlined a nearly identical strategy during Autonomy Day.

“The common belief is that you couldn’t make self-driving cars without LiDARs,” said Kilian Weinberger, associate professor of computer science at Cornell and senior author of the paper Pseudo-LiDAR from Visual Depth Estimation: Bridging the Gap in 3D Object Detection for Autonomous Driving. “We’ve shown, at least in principle, that it’s possible.”

LiDAR uses lasers to create 3D point maps of its surroundings, measuring the distance to objects from the time a light pulse takes to travel out and back. Stereo cameras rely on two perspectives to establish depth, but critics say their accuracy in object detection is too low. The Cornell researchers, however, say the data they captured from stereo cameras was nearly as precise as LiDAR’s. The gap in accuracy emerged when the stereo cameras’ data was being analyzed, they say.
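To make the two ranging principles concrete, here is a minimal sketch of the textbook formulas: time-of-flight range for LiDAR (half the round-trip path at the speed of light) and depth from disparity, Z = f·B/d, for a rectified stereo pair. The focal length, baseline, disparity and pulse timing below are hypothetical example values, not figures from the Cornell paper.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0

def lidar_range_m(round_trip_s: float) -> float:
    """Time-of-flight range: the pulse travels out and back, so halve the path."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0

def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth from a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px

# Hypothetical camera: 700 px focal length, 0.54 m between the two cameras.
lidar_m = lidar_range_m(200e-9)           # a 200 ns round trip
stereo_m = stereo_depth_m(700.0, 0.54, 12.6)
off_by_one_px = stereo_depth_m(700.0, 0.54, 11.6)  # disparity misjudged by 1 px

print(round(lidar_m, 2), round(stereo_m, 1), round(off_by_one_px, 1))  # 29.98 30.0 32.6
```

Because depth is inversely proportional to disparity, a one-pixel matching error at about 30 m already shifts the stereo estimate by more than 2 m in this assumed setup, which is one reason critics doubt stereo accuracy at range.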

“When you have camera images, it’s so, so, so tempting to look at the frontal view, because that’s what the camera sees,” Weinberger says. “But there also lies the problem, because if you see objects from the front then the way they’re processed actually deforms them, and you blur objects into the background and deform their shapes.”

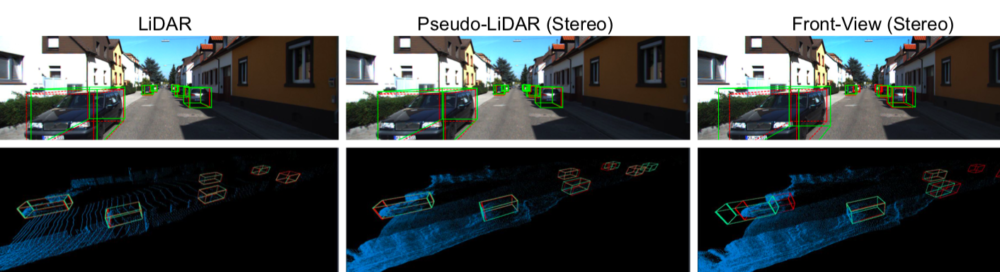

Cornell researchers compare AVOD with LiDAR, pseudo-LiDAR, and frontal-view (stereo). Ground-truth boxes are in red, predicted boxes in green; the observer in the pseudo-LiDAR plots (bottom row) is on the very left side looking to the right. The frontal-view approach (right) even miscalculates the depths of nearby objects and misses far-away objects entirely.

For most self-driving cars, the data captured by cameras or sensors is analyzed using convolutional neural networks (CNNs). The Cornell researchers say CNNs are very good at identifying objects in standard color photographs, but they can distort the 3D information if it’s represented from the front. Again, when Cornell researchers switched the representation from a frontal perspective to a bird’s-eye view, the accuracy more than tripled.
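The change of representation can be sketched in a few lines: back-project each pixel of an estimated depth map into a 3D point with the pinhole camera model (x = (u − cx)·z/fx, y = (v − cy)·z/fy), then drop the vertical axis and bin the points on a top-down grid. This is only a minimal illustration of the general "pseudo-LiDAR" idea, not the Cornell authors’ code. The function names, camera intrinsics and 0.5 m cell size are hypothetical, and the actual pipeline feeds the resulting point cloud to a 3D detector such as AVOD (named in the figure caption) rather than counting points.

```python
from collections import Counter

def depth_to_points(depth, fx, fy, cx, cy):
    """Back-project a depth map (rows of metres) into camera-frame 3D points
    using the pinhole model: x = (u - cx) * z / fx, y = (v - cy) * z / fy."""
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:  # skip pixels with no valid depth estimate
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points

def birds_eye_grid(points, cell_m=0.5):
    """Bin points by lateral x and forward z into top-down cell counts,
    discarding the vertical y coordinate."""
    counts = Counter()
    for x, _y, z in points:
        counts[(int(x // cell_m), int(z // cell_m))] += 1
    return counts

# A tiny hypothetical 2x3 depth map; 0.0 marks a pixel with no depth.
depth = [[10.0, 10.0, 0.0],
         [10.0, 10.0, 20.0]]
grid = birds_eye_grid(depth_to_points(depth, fx=100.0, fy=100.0, cx=1.0, cy=0.5))
print(sorted(grid.items()))  # [((-1, 20), 2), ((0, 20), 2), ((0, 40), 1)]
```

In the top-down grid, the near object (10 m) and the far one (20 m) land in clearly separate cells, whereas in the frontal image they are adjacent pixels that a CNN can easily blur together.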

“There is a tendency in current practice to feed the data as-is to complex machine learning algorithms under the assumption that these algorithms can always extract the relevant information,” said co-author Bharath Hariharan, assistant professor of computer science. “Our results suggest that this is not necessarily true, and that we should give some thought to how the data is represented.”

“The self-driving car industry has been reluctant to move away from LiDAR, even with the high costs, given its excellent range accuracy – which is essential for safety around the car,” said Mark Campbell, the John A. Mellowes ’60 Professor and S.C. Thomas Sze Director of the Sibley School of Mechanical and Aerospace Engineering and a co-author of the paper. “The dramatic improvement of range detection and accuracy, with the bird’s-eye representation of camera data, has the potential to revolutionize the industry.”

Filed Under: Automotive, The Robot Report

In a life-or-death scenario, redundancy is essential. Lidar will get much cheaper once it is in full production. Leaving out a level of redundancy is unwise. Elon knows this; he is just being unduly cheap. He wants to move now rather than wait until lidar is dirt cheap. A guideway is even better at keeping the public safe.

It seems like stereo cameras are the best choice on cost for the short-to-medium term, but LiDAR costs will almost certainly come down in the future, correct?

What about the issue of illuminating objects in the dark or near-dark for stereo cameras? Isn’t that a significant drawback?

How about the potential for error in a situation where one or more of the cameras is momentarily or gradually blocked (a tree branch or dead bugs)?

In my opinion, the focus on centralizing sensing and computing in each individual auto is the actual fool’s errand, with a nod to Mr. Musk. Better to rely partly on vehicle-to-vehicle (V2V) and vehicle-to-infrastructure (V2I) communication. One could then blend sensor information from multiple vehicles and from the infrastructure itself, increasing redundancy and reducing the distance each vehicle would need to look ahead reliably. Also in my opinion, it is better to treat at least the high-traffic portions of the road network like an Internet analog, with infrastructure computers as routers and vehicles as packets to be routed efficiently to their destinations. Such infrastructure could also define and enforce guideways, as mentioned above, to reduce the navigation load on each vehicle computer and reserve roadway space for other uses such as bike lanes. This would also solve multiple problems, such as:

1) How to handle road and other construction. Instead of forcing vehicle AI to figure it out, routers could be programmed with an appropriate guideway alteration and other parameters, such as speed reductions or stops based on work-crew signals.
2) The extreme mapping accuracy and detail required to support existing self-driving solutions, and thus the potential failures as maps inevitably become out of date.
3) How to handle failures in poorly maintained personal vehicles. By relying on other vehicles’ sensors and on guideways, such a vehicle could be safely moved to a place where it could be repaired.

The biggest issue with LIDAR today is its very low resolution (i.e., large pixel size), which limits its ability to detect and classify relatively small objects like people, children, bicycles, etc. within braking distance. I have to agree with Tesla/Musk on this big time.

https://www.eejournal.com/article/maybe-you-cant-drive-my-car-yet/

In addition to the cameras, which will always be limited by their dynamic range against direct sunlight, I would add a single forward-looking radar as a redundant reality check, just to make certain that the cameras are not missing anything. And an IR camera as well: redundant systems looking at alternative spectra. But it will not matter, because the code will never be right.

The LIDAR Uber is using has a typical left/right angular resolution of about 0.1152 to 0.4608 degrees over a 360-degree field at 10 fps. Its typical up/down angular resolution, however, is about 0.4 degrees over a narrow 26.8-degree field. The LIDAR can double its left/right angular resolution by dropping to 5 fps, or decrease it by 50% at 15 fps. The human eye has a left/right/up/down angular resolution of about 0.02 degrees at something faster than 12 fps, up to a best-case perception of around 90 fps for certain trained activities. All said and done, the human eye is something like 100x better than this LIDAR for normal things, and as much as several orders of magnitude better for specialized events.

Why is this important? It comes down to how many pixels it takes to recognize an object. At 100 ft, a single pixel is about a 6″ square with a 0.28-degree angular resolution, and a 0.5″ square at your eye’s 0.02-degree angular resolution: 144 times as many pixels. The 0.4-degree vertical resolution of Uber’s LIDAR increases the pixel size by another 30%, giving human-scale perception about 200 pixels for every Uber LIDAR pixel.
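The pixel sizes in that comparison come from simple trigonometry: the width one angular step covers at distance D is D·tan(θ). A minimal sketch using the angles quoted in the comment (the function name is illustrative):

```python
import math

INCHES_PER_FOOT = 12.0

def pixel_footprint_in(angle_deg: float, distance_ft: float) -> float:
    """Width in inches covered by one angular step at the given distance."""
    return math.tan(math.radians(angle_deg)) * distance_ft * INCHES_PER_FOOT

lidar_h = pixel_footprint_in(0.28, 100)  # horizontal LIDAR step quoted above
lidar_v = pixel_footprint_in(0.4, 100)   # vertical LIDAR step quoted above
eye = pixel_footprint_in(0.02, 100)      # human-eye figure quoted above

print(f"{lidar_h:.1f} in x {lidar_v:.1f} in vs {eye:.2f} in")  # 5.9 in x 8.4 in vs 0.42 in
```

At 100 ft this gives a LIDAR cell of roughly 6 by 8 inches against well under half an inch for the eye, in line with the figures above.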

Why is this LIDAR sensor resolution important?

At 45 mph it takes about 100 feet to stop a car. At this LIDAR’s angular resolution, a small child facing the sensor head-on will cover fewer than a dozen pixels at 100 ft, and will probably not be recognizable unless perfectly aligned within a very tight pixel mask … shift 3″ to either side and the limbs will blur into two pixels, presenting a blob likely to blend into the background image. If the child is sideways, there are not enough pixels.
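Both numbers in that paragraph can be checked with a rough sketch. The braking distance uses constant deceleration, d = v²/(2a), with an assumed 0.7 g on dry pavement and no reaction time. The pixel count treats the child as an assumed flat 12-inch-wide, 36-inch-tall rectangle facing the sensor and reuses the 0.28° by 0.4° cell from the comment above. The deceleration, child dimensions and function names are illustrative assumptions, not measured values.

```python
import math

FT_PER_S_PER_MPH = 5280.0 / 3600.0
G_FT_S2 = 32.174  # standard gravity in ft/s^2

def braking_distance_ft(speed_mph, decel_g=0.7):
    """Stopping distance at constant deceleration, v^2 / (2a).
    decel_g = 0.7 is an assumed dry-road value; reaction time is ignored."""
    v = speed_mph * FT_PER_S_PER_MPH
    return v * v / (2.0 * decel_g * G_FT_S2)

def pixels_on_target(width_in, height_in, distance_ft, h_deg=0.28, v_deg=0.4):
    """Approximate number of LIDAR cells covering a flat target facing the sensor."""
    cell_w = math.tan(math.radians(h_deg)) * distance_ft * 12.0
    cell_h = math.tan(math.radians(v_deg)) * distance_ft * 12.0
    return (width_in / cell_w) * (height_in / cell_h)

stop = braking_distance_ft(45)
child = pixels_on_target(12, 36, stop)  # assumed child silhouette, in inches
print(round(stop), round(child, 1))  # 97 9.4
```

Under these assumptions, the car needs roughly 100 ft to stop, and at that range the child occupies fewer than ten LIDAR cells.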

The higher the LIDAR is mounted on the vehicle, the worse the problem of detecting children becomes, as the geometric separation between the child and the background shrinks, which easily lets the background dominate if noise filters are active.

Now is that safe?

Uber and the police did not release the LIDAR images for the woman killed by Uber.

But taking a guess, the bicycle would not even show up on the LIDAR, because the cross sections of the tires, spokes, frame, etc. are all a tiny fraction of the pixel size and were probably blurred into the background return signal … effectively transparent at this resolution.

The woman was sideways to the LIDAR with minimal cross section, and only a few pixels wide/tall at the stopping distance of the car.

This is where the human eye’s pixel resolution makes all the difference between safe and the poor prototype that self-driving car developers want the public to believe is safe.

The Tesla/Musk solution with higher resolution stereo video is certainly better, but still way short of human perception.

Quote from the previous NYTimes article, on how long drivers take to assume control: “Experiments conducted last year by Virginia Tech researchers and supported by the national safety administration found that it took drivers of Level 3 cars an average of 17 seconds to respond to takeover requests. In that period, a vehicle going 65 m.p.h. would have traveled 1,621 feet — more than five football fields.”

This is what killed the Tesla driver last year who died shortly after giving the Tesla control.
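The 1,621-foot figure in the quoted study is just speed multiplied by the 17-second response time. A quick check, assuming a 300 ft football field measured goal line to goal line:

```python
FT_PER_S_PER_MPH = 5280.0 / 3600.0
FOOTBALL_FIELD_FT = 300.0  # assumed: goal line to goal line, end zones excluded

def distance_ft(speed_mph: float, seconds: float) -> float:
    """Distance covered at constant speed during the takeover delay."""
    return speed_mph * FT_PER_S_PER_MPH * seconds

d = distance_ft(65, 17)
print(round(d), round(d / FOOTBALL_FIELD_FT, 1))  # 1621 5.4
```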

Our brains process images AND rational decisions at a significantly higher rate than the feeble AI in these cars.

Unless someone is a psychopath, they will make critical decisions that include restrictions not to harm other people when multiple choices are presented to avoid dangerous situations. The computer in the car probably doesn’t consider such ethical issues when navigating to avoid a collision.

When people ask how safe are self driving cars, I generally say about as safe as a geriatric psychopath with cataracts and dementia, because when it’s all said and done, that is about what their skill level is.

No matter how you slice it, any sane engineer knows that the first 90% of basic functionality takes the first 10% of the project development cycle. Finishing the project to nearly 99% functionality will take another 90% of the development cycle. The last 1% will take another 3x the schedule it took to reach 99%.

So, given that self-driving cars are maybe several years along the development path and less than 90% complete, they will probably be fully functional (and reasonably safe as a human replacement) in something longer than another 10 years. The Mythical Man-Month provides hard limits on progressing faster.
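One way to read the commenter’s rule of thumb is as a schedule model in units of a nominal development cycle T. This is a sketch of that reading only; the function name and the interpretation of "another 3x" as three times the time already spent reaching 99% are assumptions.

```python
def milestone_times(base_cycle: float) -> dict:
    """Commenter's rule of thumb, with base_cycle as the nominal cycle T:
    90% done after 0.1*T, 99% after another 0.9*T, and the last 1%
    taking a further 3x the time already spent reaching 99%."""
    t90 = 0.1 * base_cycle
    t99 = t90 + 0.9 * base_cycle
    t100 = t99 + 3.0 * t99
    return {"90%": t90, "99%": t99, "100%": t100}

print(milestone_times(1.0))  # {'90%': 0.1, '99%': 1.0, '100%': 4.0}
```

Under this reading, reaching 90% uses only 2.5% of the total 4T schedule, which is why being "several years along" says little about how long the remainder will take.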

How many more people will die? A lot more than either Uber or Tesla projects.

And there is the Golden Handcuffs problem … these engineers are accepting high industry salaries for saying yes they can solve the problems … but are they ethical enough to walk away before killing more people and kids?

In the article above, all three pictures present recognition wire frames against a FALSE high-resolution photo, which subtly gives the reader a FALSE sense of the sensor’s resolution. In reality, LIDAR isn’t color, and its resolution is a tiny fraction of the background image’s.

This hides the real problem. Present the LIDAR image as grayscale, where depth is brightness, with the real effective pixel size, and it quickly becomes clear just how difficult this identification problem is when looking at 2-5 pixel blobs … or even single-pixel blobs with background noise.

Furthermore, show the real noise variations of the pixels as a noise-floor graph, which in some critical cases leaves the information about an object well inside the noise level: e.g., a small child where the background is less than 1% of the child’s distance behind the child.