Simulation has long been a mainstream and important product testing tool for manufacturers.

With many benefits to offer, computer-aided engineering is now an integral part of the product design process. Simulation has helped manufacturers cut product development time and the associated costs dramatically.

It helps predict design flaws early and gives engineers room to try innovative ideas directly on a virtual product model.

At present, simulation tools can predict product behavior with a good degree of accuracy, provided that all the boundary conditions are applied carefully.

As these tools become more advanced in the coming years, a vital question remains about the role of physical tests, which are currently considered the gold standard and the means of validating simulation results.

Can simulation tools replace physical tests completely in the future? Will these tools mature enough to understand and respond accurately to any real-world conditions?

If so, what barriers keep simulation from becoming the gold standard for product testing?

Barriers to Relying Completely on Simulation

Computer simulations produce results by solving a set of mathematical equations that define the physical system under consideration.

As such, the results of a simulation depend entirely on what goes into the calculation. Obtaining meaningful results therefore depends on the user's inputs, which in turn require a great deal of experience and expertise.

That said, many phenomena can be described accurately through equations, which physicists build from experimental data, apply in simulation, and then try to validate.

Any change in the design or in an input parameter can change the results, which then require validation again. If the simulation results fail validation, the problem has to be studied again experimentally.

It is thus the interplay between experiments and simulations that keeps refining the models and makes them more accurate.
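The calibrate-then-validate loop described above can be sketched in a few lines. This is only an illustration under stated assumptions: the linear spring model F = k·x, the function names, the 5% tolerance and all measurement values are hypothetical and do not come from any real test campaign.

```python
def calibrate_stiffness(displacements, forces):
    """Least-squares fit of k in F = k * x (line through the origin)."""
    numerator = sum(x * f for x, f in zip(displacements, forces))
    denominator = sum(x * x for x in displacements)
    return numerator / denominator


def simulate_force(stiffness, displacement):
    """The 'simulation': predict force from the calibrated model."""
    return stiffness * displacement


def is_validated(stiffness, test_points, tolerance=0.05):
    """True if every prediction is within `tolerance` (relative) of the test."""
    for displacement, measured_force in test_points:
        predicted = simulate_force(stiffness, displacement)
        if abs(predicted - measured_force) > tolerance * abs(measured_force):
            return False
    return True


# Hypothetical calibration experiments (displacement in mm, force in N).
k = calibrate_stiffness([1.0, 2.0, 3.0], [10.2, 19.8, 30.1])

# Hypothetical validation experiments, one inside and one outside the
# range where the linear model is expected to hold.
within_range = [(4.0, 40.5)]
outside_range = [(6.0, 75.0)]

print("validated within range:", is_validated(k, within_range))
print("validated outside range:", is_validated(k, outside_range))
```

When the second check fails, the model has left the regime it was calibrated for, which is the point at which the article's argument sends the engineer back to the test bench.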

So, for models that have been developed through a number of experimental validations, it is possible to eliminate physical tests completely.

However, as new materials are increasingly being tested to replace conventional ones, it is difficult to predict their behavior under a range of loading and boundary conditions, as there is no historical knowledge about the material.

Also, the accuracy of simulation relies heavily on computational capability; the more complex the design, the more difficult and time-consuming it is to get accurate results through simulation.

In addition, variability in the material further increases the complexity of predicting product behavior accurately.

Future Possibilities

It is evident that the accuracy of simulation tools can only be ascertained when these tools are verified and validated with physical tests.

This directly implies that physical tests will be required at some point in the design process, even when the simulation tools are sufficiently advanced.

However, it may be possible to certify existing models to the extent that physical tests can be completely eliminated. This requires advances in two major fields: the development of a systematic maturity model for simulation tools and the transition to exascale computing.

Development of a Systematic Maturity Model

At present, composite material development is studied mainly through a number of physical experiments.

These experiments can be reduced considerably through simulation, but this would require computational models that comprehensively integrate composite material behavior, from its birth in manufacturing to lifetime prediction.

Efforts in developing such models can eliminate thousands of physical tests required for material certification, and would significantly help in improving manufacturing performance.

In 2013, Purdue took a step further in this direction and established a platform for composites design and manufacturing to evaluate simulation tools for composites.

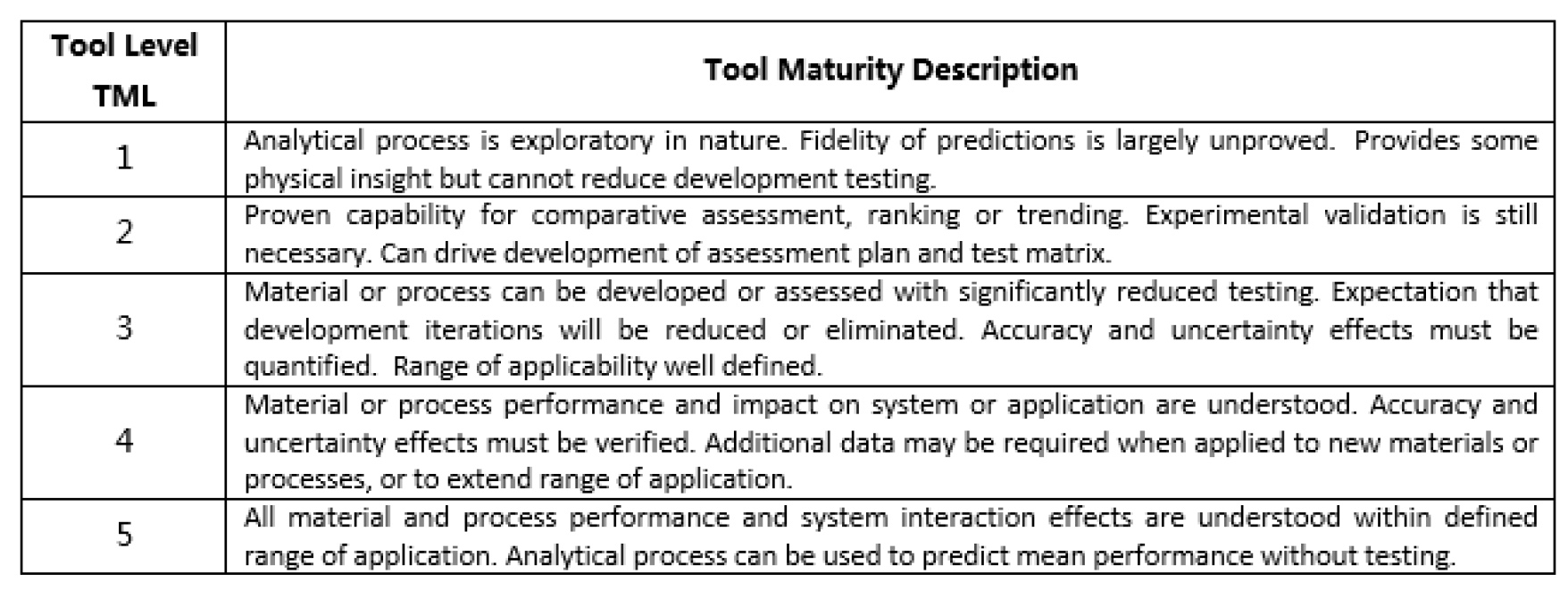

The purpose of this hub is to certify simulation tools, so that no further verification and validation would be necessary. Prior to certification of simulation tools for composites, their assessment is required, which is performed using the Tool Maturity Level (TML) as proposed by Cowles, Backman and Dutton. The TML is defined at 5 levels as shown in the table below:

The TML assessment can thus act as a gated process that ensures all the criteria for a certified simulation model are met at each level.

At level 5, the model can be considered mature enough to carry out simulation without the need for verification and validation.
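The gated idea can be sketched as follows. This is a hypothetical illustration only: the function name, the data layout and the placeholder criterion names are assumptions, and they are not the actual TML criteria defined by Cowles, Backman and Dutton.

```python
def assess_maturity_level(criteria_by_level):
    """Return the highest level reached when every lower gate has also passed.

    `criteria_by_level` maps a level number to a dict of
    {criterion_name: passed}. A level counts only if all of its criteria
    pass and all lower levels have passed too.
    """
    achieved = 0
    for level in sorted(criteria_by_level):
        if all(criteria_by_level[level].values()):
            achieved = level
        else:
            break  # gate failed: higher levels are not considered
    return achieved


# Placeholder criteria, for illustration only.
example_assessment = {
    1: {"criterion_a": True},
    2: {"criterion_b": True, "criterion_c": True},
    3: {"criterion_d": False},  # gate not passed
    4: {"criterion_e": True},   # ignored because level 3 failed
    5: {"criterion_f": True},
}

level = assess_maturity_level(example_assessment)
print("achieved level:", level)
```

The early `break` is what makes the process gated: passing the criteria of a higher level does not count until every lower level has been cleared.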

Continued effort to refine such models can be highly advantageous, and is a further step toward reducing physical tests or, in many cases, eliminating them completely.

Apparently, one of the best examples of fully relying on simulation results is the newly developed Jaguar XE, the first car to move into production without a prototype build for aerodynamic testing.

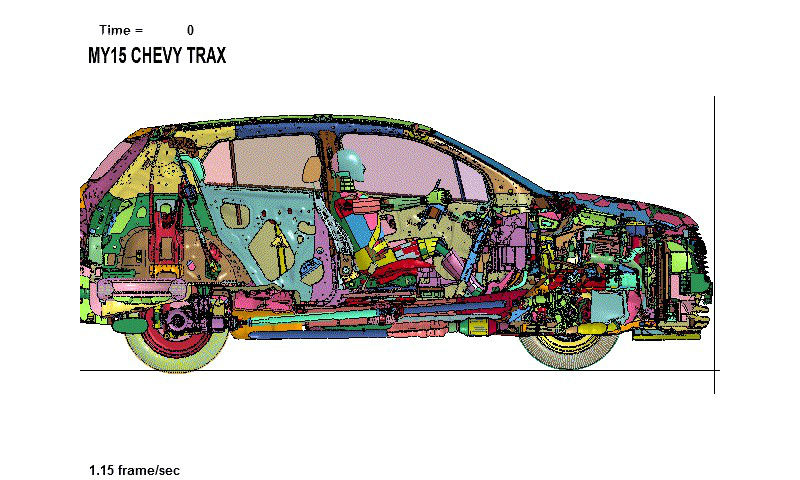

With a drag coefficient (Cd) of 0.26, it is the most aerodynamically efficient car Jaguar has ever developed, and it was developed using software. GM’s engineers also use simulated crashes to build safe cars.

They still perform physical validations, but they have managed to keep the on-screen results in close agreement with the physical tests.

Source: http://www.wired.com/2015/04/gms-using-simulated-crashes-build-safer-cars/
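To give a feel for what the drag coefficient of 0.26 quoted above for the Jaguar XE means in practice, the standard drag equation F = 0.5 · ρ · v² · Cd · A can be evaluated directly. Only Cd comes from the article; the frontal area, speed and air density in this sketch are illustrative assumptions, not published figures for the car.

```python
def drag_force(drag_coefficient, frontal_area_m2, speed_m_s,
               air_density_kg_m3=1.225):
    """Aerodynamic drag in newtons: F = 0.5 * rho * v^2 * Cd * A."""
    return (0.5 * air_density_kg_m3 * speed_m_s ** 2
            * drag_coefficient * frontal_area_m2)


# Cd = 0.26 is the value quoted in the article. The frontal area (2.2 m^2)
# and speed (30 m/s) are assumed values chosen only for illustration.
force_n = drag_force(drag_coefficient=0.26, frontal_area_m2=2.2,
                     speed_m_s=30.0)

# The same assumed car with a higher, hypothetical Cd for comparison.
force_higher_cd_n = drag_force(drag_coefficient=0.30, frontal_area_m2=2.2,
                               speed_m_s=30.0)

print(f"Estimated drag at Cd 0.26: {force_n:.0f} N")
print(f"Estimated drag at Cd 0.30: {force_higher_cd_n:.0f} N")
```

Because drag grows with the square of speed, small reductions in Cd matter most at highway speeds, which is why aerodynamic work is an attractive target for simulation.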

Exascale Computing

An increase in computational power is another factor that opens up the possibility of eliminating physical tests in many cases.

Several computational problems can be solved completely, provided there is enough computational power. One example is turbulence in a finite domain (such as in a cup of coffee or inside a jet engine combustion chamber).

Such problems can be solved completely using sufficiently large calculations. Exascale computing is a step in this direction. However, this paradigm shift from petascale to exascale will require huge investments in everything from hardware to fundamental algorithms, programming models, compilers and application codes.

This means that it is highly important to justify this huge expenditure by identifying the critical areas where exascale computing can be applied.
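The turbulence example shows why the computing demand grows so quickly. A commonly used textbook estimate for fully resolved (direct numerical) simulation of three-dimensional turbulence is that the number of grid points scales roughly as Re^(9/4), and the total work as roughly Re^3, where Re is the Reynolds number. The sketch below applies that estimate. Proportionality constants are omitted, so only the ratios are meaningful, and the Reynolds numbers are illustrative values, not measurements of any particular flow.

```python
def dns_grid_points(reynolds_number):
    """Approximate grid-point count for 3D DNS, using N ~ Re^(9/4)."""
    return reynolds_number ** (9 / 4)


def dns_relative_work(reynolds_number):
    """Approximate total work (grid points x time steps), using ~ Re^3."""
    return reynolds_number ** 3


baseline = 1e4  # illustrative Reynolds number used as the reference case
for reynolds in (1e4, 1e5, 1e6):
    points = dns_grid_points(reynolds)
    work_ratio = dns_relative_work(reynolds) / dns_relative_work(baseline)
    print(f"Re = {reynolds:.0e}: ~{points:.1e} grid points, "
          f"~{work_ratio:.0e}x the work of the reference case")
```

Under this estimate, a thousandfold increase in machine speed, such as the step from petascale to exascale, buys only about a tenfold increase in the Reynolds number that can be fully resolved.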

A report published by the U.S. Department of Energy explains how and why exascale computing is needed to truly transform computational science into a fully predictive science for real-world systems.

Real-world systems are characterized by multiple, interacting physical processes that occur on a wide range of both temporal and spatial scales. At petascale, the ability to couple these calculations is limited.

With exascale, however, fidelity will increase substantially, making it possible to move to predictive science and engineering calculations by applying uncertainty quantification (UQ) to simulate and predict real-world conditions.
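In its simplest form, uncertainty quantification means running the same model many times with inputs drawn from their expected spread and reporting a distribution rather than a single number. The sketch below is a minimal Monte Carlo illustration under stated assumptions: a cantilever beam with tip deflection δ = P·L³ / (3·E·I) stands in for the simulation, and the load, geometry and 5% scatter in Young's modulus are all hypothetical values.

```python
import random
import statistics


def tip_deflection(load_n, length_m, youngs_modulus_pa, second_moment_m4):
    """Tip deflection of an end-loaded cantilever: P * L^3 / (3 * E * I)."""
    return load_n * length_m ** 3 / (3.0 * youngs_modulus_pa * second_moment_m4)


def monte_carlo_deflection(n_samples, mean_modulus_pa, std_modulus_pa, seed=0):
    """Propagate scatter in Young's modulus through the deflection model."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    samples = []
    for _ in range(n_samples):
        modulus = rng.gauss(mean_modulus_pa, std_modulus_pa)
        # Assumed load (1 kN), length (1 m) and section (I = 8e-7 m^4).
        samples.append(tip_deflection(1000.0, 1.0, modulus, 8.0e-7))
    return statistics.mean(samples), statistics.stdev(samples)


# Assumed material: E = 200 GPa with a 5% standard deviation.
mean_d, std_d = monte_carlo_deflection(10_000, 200e9, 10e9)
nominal_d = tip_deflection(1000.0, 1.0, 200e9, 8.0e-7)

print(f"nominal deflection: {nominal_d * 1000:.3f} mm")
print(f"mean deflection:    {mean_d * 1000:.3f} mm")
print(f"standard deviation: {std_d * 1000:.3f} mm")
```

Each sample here costs one formula evaluation. When each sample is a full multi-physics simulation, the thousands of runs that UQ needs are a large part of why it is tied to exascale-class machines.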

Exascale machines, expected to be developed by 2020, will help simulation become far more realistic in many domains. It will then be easier to measure wear in materials more accurately, or to explore all the possible aerodynamic shapes of aircraft wings, compare them and choose the most efficient and safest one.

Closure

It is still too early to predict the replacement of physical tests with simulation; yet, it is evident that the future of computational science is about to see a revolutionary change.

Physical tests will not be eliminated completely, but their number will fall dramatically as simulation models continue to evolve and mature enough to represent real-world conditions more accurately.

With exascale computing, the opportunity exists to solve many computational problems more accurately than with today’s petascale computing.

It is, however, in the interest of mankind to recognize that physical tests have their own importance. “Seeing is believing” in cases where human life is involved, and it is always good to have at least a final physical test before trusting the product design.

What are your opinions?
