NVIDIA Jetson AGX Xavier Module (Credit: NVIDIA)

At its Jetson Developer Meetup, NVIDIA announced the Jetson AGX Xavier Module for autonomous machines, which delivers up to 32 TeraOPS (TOPS) of accelerated computing capability. The NVIDIA Jetson AGX Xavier Module, which the company calls an AI computer, is now available for $1,099 (based on 1,000-unit volume).

NVIDIA introduced the Jetson AGX Xavier developer kit in September 2018. Deepu Talla, NVIDIA’s VP and GM of Autonomous Machines, said the difference, of course, is that “the developer kit is used for development. Customers interested in going into commercial production buy the module.” He said NVIDIA has 1,800-plus customers using Jetson in commercial products.

NVIDIA also introduced the Jetson TX2 4GB ($299 in 1,000 unit volume). Other models include the Jetson TX2 8GB and the Jetson TX2 Industrial. “A lot of our customers wanted to use the TX2, but they wanted a lower price point,” Talla said. He added that NVIDIA has more than 200,000 Jetson developers worldwide, which is about five times the number that it had in the spring of 2017.

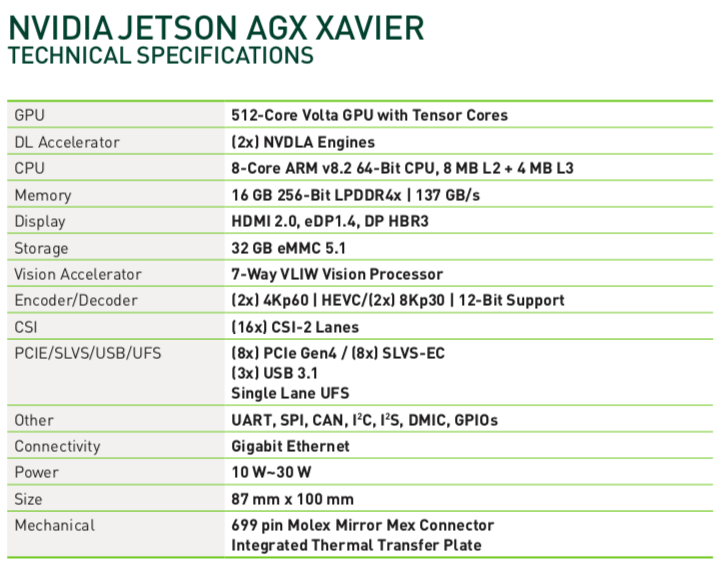

Talla said the Jetson AGX Xavier Module offers more than 20 times the performance and 10 times the energy efficiency of its predecessor, the NVIDIA Jetson TX2. The NVIDIA Jetson AGX Xavier Module also offers 750Gbps of high-speed I/O in a compact 100x87mm form factor. Users can configure operating modes at 10W, 15W, and 30W as needed.

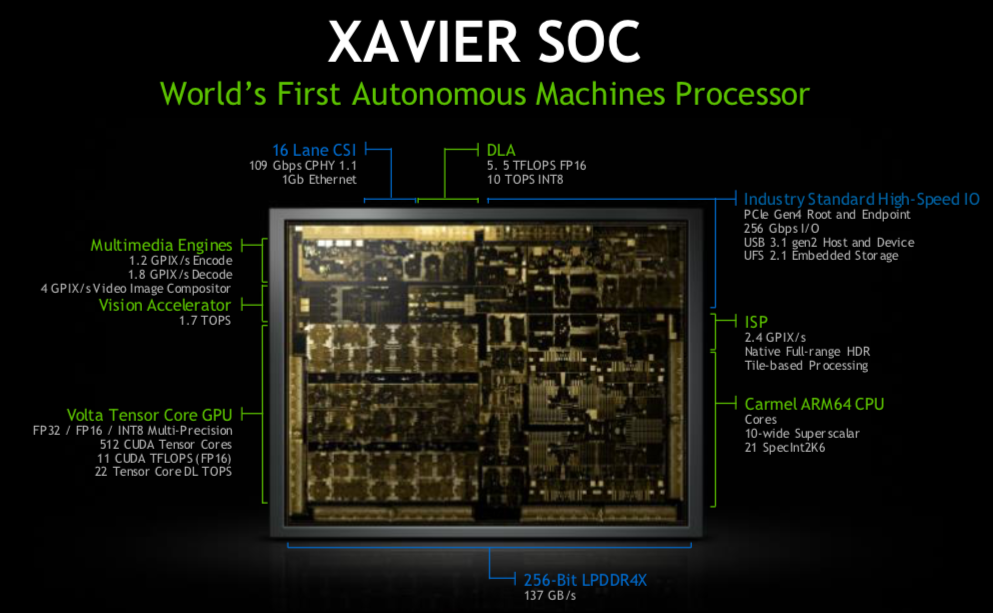

The NVIDIA Jetson AGX Xavier Module can handle visual odometry, sensor fusion, localization and mapping, obstacle detection, and path planning algorithms. Like its predecessors, the NVIDIA Jetson AGX Xavier Module uses a System-on-Module (SoM) paradigm. All the processing is contained onboard the module, while high-speed I/O is routed through a high-density board-to-board connector to a breakout carrier board or enclosure.

NVIDIA’s Jetson Meetup at its Endeavor headquarters in Santa Clara, Calif. hosted 500-plus developers and 30-plus partners.

The platform comprises an integrated 512-core NVIDIA Volta GPU, including 64 Tensor Cores, 8-core NVIDIA Carmel ARMv8.2 64-bit CPU, 16GB 256-bit LPDDR4x, dual NVIDIA Deep Learning Accelerator (DLA) engines, NVIDIA Vision Accelerator engine, HD video codecs, 128Gbps of dedicated camera ingest, and 16 lanes of PCIe Gen 4 expansion. Memory bandwidth over the 256-bit interface weighs in at 137GB/s, while the DLA engines offload inferencing of Deep Neural Networks.
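As a rough sanity check on the 137GB/s figure, peak memory bandwidth can be estimated as bus width in bytes multiplied by the transfer rate. The sketch below assumes an LPDDR4x data rate of 4,266 mega-transfers per second, a value that is not stated in this article; the function and constant names are illustrative only.

```python
def peak_bandwidth_gb_per_s(bus_width_bits: int, transfer_rate_mt_per_s: float) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    bytes_per_second = bytes_per_transfer * transfer_rate_mt_per_s * 1e6
    return bytes_per_second / 1e9


BUS_WIDTH_BITS = 256                 # from the article: 256-bit LPDDR4x interface
ASSUMED_TRANSFER_RATE_MT_S = 4266    # assumption: not given in the article

bandwidth = peak_bandwidth_gb_per_s(BUS_WIDTH_BITS, ASSUMED_TRANSFER_RATE_MT_S)
print(f"Estimated peak bandwidth: {bandwidth:.1f} GB/s")
```

Under that assumed data rate the estimate lands just under the quoted figure, which suggests 137GB/s is a rounded theoretical peak rather than a measured sustained rate.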

The NVIDIA Jetson AGX Xavier is supported by NVIDIA’s JetPack, which includes a board support package (BSP), an Ubuntu Linux OS, and the NVIDIA CUDA, cuDNN, and TensorRT software libraries for deep learning, computer vision, GPU computing, multimedia processing, and more. It’s also supported by the NVIDIA DeepStream SDK, a toolkit for real-time situational awareness through intelligent video analytics (IVA).

Robots using the Jetson platform

The Jetson Meetup was the largest in NVIDIA’s history, attracting 500-plus developers and 30-plus partners that use the Jetson platform. Many of the partners on hand were robotics companies, including Brain Corp, Marble, Skydio, Smart Ag and more. Companies not on hand that use Jetson AGX Xavier for robotics include Cainiao (the logistics arm of Alibaba), Denso, Fanuc, JD, Komatsu and more.

Starship Technologies, which wrote a great piece detailing how neural networks help its delivery robots, uses an earlier version of Jetson. Chinese startup Syrius Robotics, which is building autonomous mobile robots for JD.com, uses the NVIDIA Jetson TX2.

Ames, Iowa-based Smart Ag turns farm equipment into autonomous machines, in part by using the NVIDIA Jetson AGX Xavier. Think Brain Corp., but on the farm instead of in retail stores. The company’s primary goal was safety, so that machines do not run over people on the farm, but as it integrated the system and expanded the training of its model, new applications popped up.

“Once we can perceive the environment, it opens up a lot of possibilities for us outside of the safety space,” said Mark Barglof, chief technology officer at Smart Ag. “We can identify standing crops, identify different vehicle types, identify terrain features and more.”

NVIDIA discussed how mobile delivery robots use the Jetson AGX Xavier for autonomous navigation. These types of robots typically have several HD cameras for 360-degree vision, LiDAR and other sensors that are fused together. Talla said mobile delivery robots are essentially a “slower self-driving car.” They don’t travel at the same speeds, of course, but they operate in dynamic environments and require higher levels of computing power.

Example AI processing pipeline of an autonomous delivery and logistics robot

NVIDIA said a forward-facing stereo driving camera is often used, needing pre-processing and stereo depth mapping. NVIDIA has created Stereo DNN models to support this. Object detection models like SSD or Faster-RCNN and feature-based tracking typically inform obstacle avoidance of pedestrians, vehicles, and landmarks.
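To make the stereo depth mapping step more concrete, here is a minimal sketch of the classical pinhole-camera relationship between disparity and depth. This is not NVIDIA’s Stereo DNN approach, which learns depth from image pairs; it only illustrates the underlying geometry. The function name and the camera values (focal length, baseline, disparity) are hypothetical.

```python
import math


def depth_from_disparity(disparity_px: float, focal_length_px: float, baseline_m: float) -> float:
    """Depth in meters for a rectified stereo pair: Z = f * B / d.

    A non-positive disparity means no valid match, so the point is
    treated as infinitely far away.
    """
    if disparity_px <= 0:
        return math.inf
    return focal_length_px * baseline_m / disparity_px


# Hypothetical camera parameters, chosen only for illustration.
FOCAL_LENGTH_PX = 800.0   # focal length expressed in pixels
BASELINE_M = 0.125        # distance between the two camera centers

obstacle_depth_m = depth_from_disparity(50.0, FOCAL_LENGTH_PX, BASELINE_M)
print(f"Obstacle depth: {obstacle_depth_m:.2f} m")
```

Because depth is inversely proportional to disparity, nearby obstacles produce large disparities and are resolved more precisely than distant ones, which is one reason a slow-moving delivery robot can rely on a short-baseline stereo camera for close-range obstacle avoidance.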

NVIDIA said the Jetson AGX Xavier, with its smaller footprint and power efficiency, is “ideal for deploying advanced AI and computer vision to the edge, enabling robotic platforms in the field with workstation-level performance and the ability to operate fully autonomously without relying on human intervention and cloud connectivity.”
